Revisiting Fan 2 - Tanqua Karoo Basin

In a recent clear-out of old data CDs and DVDs, I happened across the original imagery and the DGPS data for a test study of part of Fan 2 in the Tanqua Fan complexes.

By David Hodgetts

Published On 2024-04-24

Last Edited: 2024-04-24

In 2001, I was working as a postdoctoral researcher on the NOMAD (Novel Modelled Analogue Data for more efficient exploitation of deepwater hydrocarbon reservoirs) project at the University of Liverpool as part of the STRAT Group (as in stratigraphy, not the guitar!). NOMAD was an ambitious initiative aimed at digitally mapping the Tanqua Fan complexes using differential global positioning systems (DGPS). We focused on the world-class outcrops of the Permian basin-floor turbidite fans located in the Karoo Basin, South Africa. These fans are notable for their expansive exposures, which extend approximately 40 kilometres in the depositional dip direction and 20 kilometres in the strike direction.

Our approach to digital data collection was comprehensive, employing various cutting-edge techniques. These included DGPS for mapping, surveying with laser total stations and laser rangefinders, both ground- and helicopter-based digital photography, and digital sedimentary outcrop logging, complemented by geophysical data obtained from boreholes. These diverse data sets were integrated to construct several detailed 3-D geological models of the study area.

During this period, I became interested in photogrammetry, particularly its potential for creating detailed digital outcrop models. Elevation data was already being routinely extracted from aerial imagery, and I proposed the idea that the same approach could be used on outcrops.

Figure 1: A photopanel of the original imagery for this study, with paper plates used as ground control points (GCPs). The lower mesh-style display shows the position of the GCPs.

I selected a specific field area and began an experiment by placing paper plates across the outcrop (Figure 1) to act as ground control points (GCPs), which I then surveyed using DGPS. For the photogrammetric rectification, I used Erdas IMAGINE, a state-of-the-art photogrammetric software package typically used with aerial imagery. The photogrammetric approaches of the time worked best with nadir-view imagery, so while photographing the outcrop I tried to keep the camera pointing in the same direction. I then used a rotated coordinate system with the centre of the first image set at (0, 0, 0) and the camera looking down the Z axis. In post-processing, I rotated the model back to its real-world orientation using the GCPs.
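The step of rotating a model from a camera-aligned frame back to real-world coordinates using GCPs can be illustrated with a minimal sketch. This is not the workflow used in Erdas IMAGINE in 2001; it is one simple way to do it, assuming the model is already at true scale and that three non-collinear GCPs are known in both frames. The function names and the coordinates in the usage example are hypothetical.

```python
import math

def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def unit(a):
    n = math.sqrt(dot(a, a))
    return tuple(x / n for x in a)

def frame(p0, p1, p2):
    """Right-handed orthonormal basis built from three non-collinear points."""
    e1 = unit(sub(p1, p0))
    e3 = unit(cross(e1, sub(p2, p0)))
    e2 = cross(e3, e1)
    return (e1, e2, e3)

def local_to_world(local_gcps, world_gcps):
    """Return a function mapping local (camera-aligned) points to world points.

    Assumption: a rigid transform (rotation + translation, no scaling),
    defined exactly by three GCPs. With more GCPs or noisy data, a
    least-squares fit would be the more robust choice.
    """
    fl = frame(*local_gcps)
    fw = frame(*world_gcps)
    # Rotation matrix R that maps each local basis vector onto its world twin.
    R = [[sum(fw[k][i] * fl[k][j] for k in range(3)) for j in range(3)]
         for i in range(3)]
    l0, w0 = local_gcps[0], world_gcps[0]

    def transform(p):
        d = sub(p, l0)
        return tuple(w0[i] + sum(R[i][j] * d[j] for j in range(3))
                     for i in range(3))

    return transform

# Hypothetical example: three plates in the local frame and their DGPS positions.
local = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0), (0.0, 5.0, 0.0)]
world = [(1000.0, 2000.0, 300.0), (1000.0, 2010.0, 300.0), (995.0, 2000.0, 300.0)]
to_world = local_to_world(local, world)
print(to_world((2.0, 3.0, 4.0)))
```

Because the transform is fixed by exactly three points, any survey error in those points carries straight through to the model, which is one reason to prefer a fit over all available GCPs in practice.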

While the process was quite challenging, the first outputs from this approach were promising, and the resulting models reflected the geometry of the outcrop. The resolution, however, was low, and the amount of work required to build the models made the approach too time-consuming to be a practical field solution. Even so, it gave a taste of what was to come with photogrammetric techniques applied to outcrop data.

Figure 2: The original results from 2001, processed using the state-of-the-art techniques of the time.

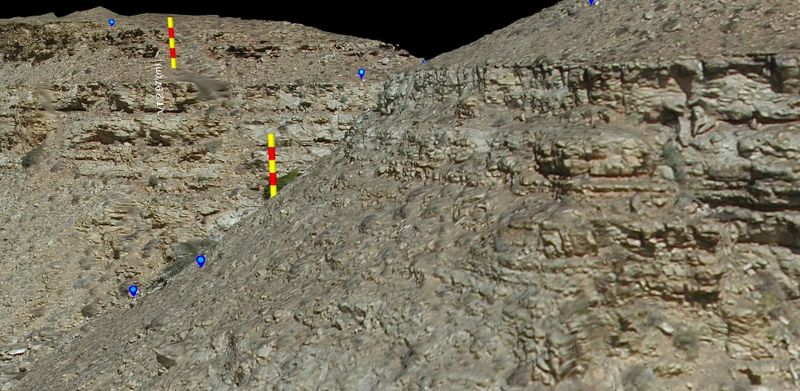

In a recent clear-out of old data CDs and DVDs, I found the original imagery and the DGPS data for a test study of part of Fan 2. I ran the imagery through Metashape, imported the results into VRGS, identified the GCP locations from the paper plate markers, and georeferenced and scaled the data using the VRGS georeferencing tools. Though the digital images are not up to modern standards, the resulting models clearly show the topography and are a massive advance on the original model results.
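To give a feel for what the scaling part of georeferencing involves, here is a small sketch that estimates a uniform scale factor from the GCPs. It is an illustration only and does not reproduce the VRGS georeferencing tools; the function name and the sample coordinates are hypothetical. It assumes the same markers have been picked in the unscaled model and surveyed in the field, in the same order.

```python
import math
from itertools import combinations

def estimate_scale(model_gcps, world_gcps):
    """Mean ratio of world to model distances over all GCP pairs.

    Assumption: the model differs from the world by a uniform scale
    (plus rotation and translation, which do not change distances).
    """
    ratios = []
    for i, j in combinations(range(len(model_gcps)), 2):
        d_model = math.dist(model_gcps[i], model_gcps[j])
        d_world = math.dist(world_gcps[i], world_gcps[j])
        if d_model > 0:
            ratios.append(d_world / d_model)
    if not ratios:
        raise ValueError("need at least two distinct GCPs")
    return sum(ratios) / len(ratios)

# Hypothetical example: model units are arbitrary, world units are metres.
model_pts = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
world_pts = [(500.0, 100.0, 50.0), (502.5, 100.0, 50.0), (500.0, 105.0, 50.0)]
scale = estimate_scale(model_pts, world_pts)
print(scale)
```

Averaging over every pair of GCPs, rather than using a single pair, spreads out the effect of a poorly picked marker.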

The original data processing suggested an accuracy of ±30 cm. The reprocessed data has errors of less than 30 cm compared to the GCPs, but the point density is much greater, and there is far more detail in the outcrop geometry. The processing now is, of course, far easier.
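An error figure of this kind is typically obtained by comparing where each GCP falls in the georeferenced model with its surveyed position. The sketch below shows one common way to summarise that comparison, as per-point 3D residuals and their root-mean-square error (RMSE). The function names and sample values are hypothetical and are not the measurements from this study.

```python
import math

def gcp_residuals(model_pts, surveyed_pts):
    """3D distance between each model-derived GCP and its surveyed position."""
    return [math.dist(m, s) for m, s in zip(model_pts, surveyed_pts)]

def rmse(residuals):
    """Root-mean-square of a list of residuals."""
    return math.sqrt(sum(r * r for r in residuals) / len(residuals))

# Hypothetical positions in metres: GCPs picked in the model vs. DGPS survey.
model_pts = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0)]
surveyed_pts = [(0.3, 0.0, 0.0), (1.0, 1.0, 1.4)]

residuals = gcp_residuals(model_pts, surveyed_pts)
print("max residual (m):", max(residuals))
print("RMSE (m):", rmse(residuals))
```

Reporting the maximum residual alongside the RMSE helps show whether a single badly located plate is dominating the error.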

This is another example of how old datasets can be given new life through reprocessing (see also our Wadi Nukhul example on YouTube).

Time has moved on, but keeping old datasets and reprocessing them with new approaches can lead to massive improvements in data quality.
